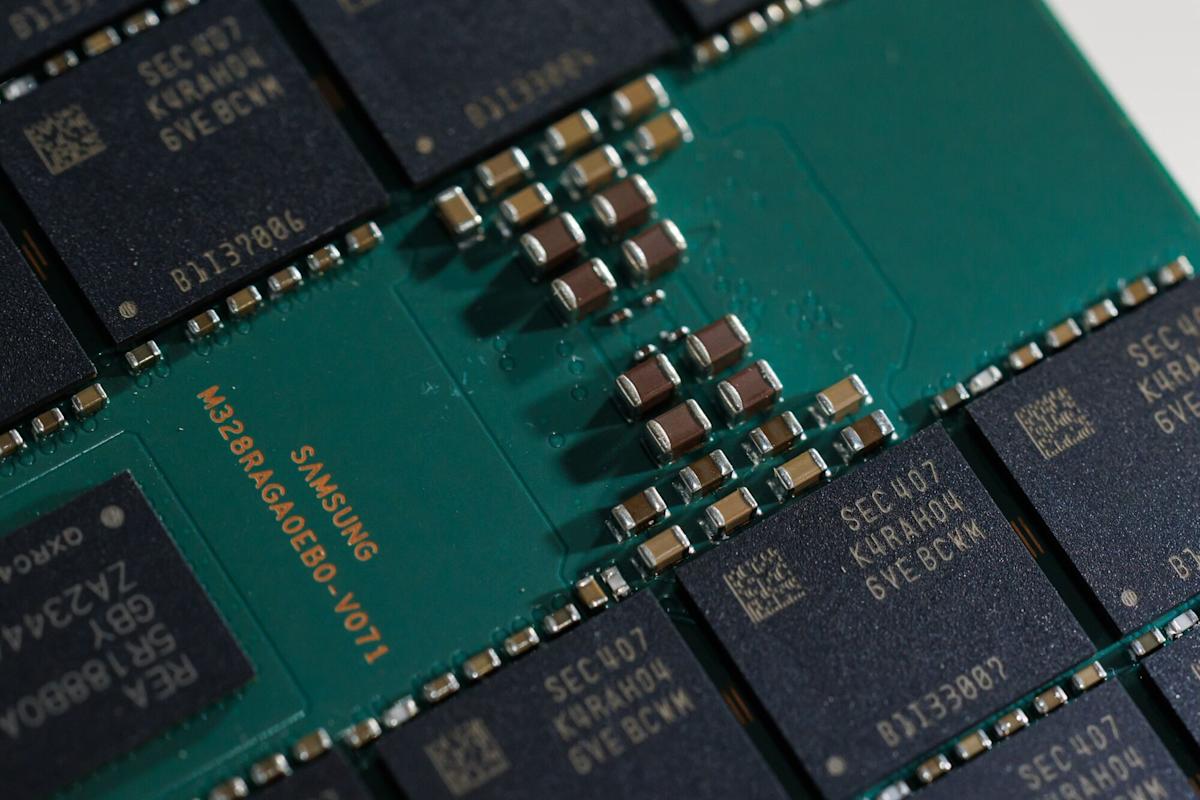

(Bloomberg) — Memory chip stocks extended their losses on Thursday after Alphabet Inc.’s Google publicized research on a new algorithm that could allow more efficient use of the storage needed for artificial intelligence development.

Samsung Electronics Co. and SK Hynix Inc., South Korean leaders in the market, both fell at least 6% in Seoul. Micron Technology Inc., Western Digital Corp. and Sandisk Corp. all slid at least 7% in US trading, after closing lower on Wednesday.

Memory chip companies had been on a tear in recent months as surging investment in AI infrastructure led to shortages, triggering a spike in chip prices and profit. SK Hynix and Samsung shares had soared more than 50% this year through Wednesday, while long-time laggard Kioxia Holdings Corp. had more than doubled.

Google’s new technology could alleviate the supply crunch, potentially pushing down prices. The company publicized the research on X this week, although it had originally come out last year.

Google said its TurboQuant algorithm can cut the amount of memory required to run large language models by at least a factor of six, reducing the overall cost of running artificial intelligence models. Investors may be concerned that this could reduce the need for memory from hyperscalers, the largest data center operators. Weaker demand from those buyers would push down prices for components that are also used in smartphones and consumer electronics.

Four hyperscalers, led by Amazon.com Inc. and Google, plan to spend about $650 billion this year to build data centers, scooping up Nvidia Corp.’s AI accelerators and related memory chips.

SK Group Chairman Chey Tae-won recently said that the memory chip crunch will last until 2030.

Morgan Stanley analyst Shawn Kim wrote in a note that the impact of Google’s research on the industry should be more positive because it affects a critical bottleneck. It improves the efficiency of what’s known as the key-value cache, which is used for inference, or running AI models.

“If models can run with materially lower memory requirements without losing performance, the cost of serving each query drops meaningfully, resulting in more profitable AI deployment,” he wrote.

Like many of the bulls in the AI industry and analyst community, he cited a theory known as the Jevons Paradox. The concept, which English economist William Stanley Jevons developed in writing about coal consumption, holds that the more efficiently a technology uses a resource, the more demand for that resource will rise.

The 19th-century premise was also cited by JPMorgan Chase & Co. and Citigroup Inc. JPMorgan analysts said that investors may take profits on the news, but that there’s no near-term threat to memory consumption.