On a Saturday morning, you head to the hardware store. Your neighbors’ Ring cameras film your walk to the car. Your car’s sensors, cameras and microphones record your speed, how you drive, where you’re going, who’s with you, what you say, and biological metrics such as facial expressions, weight and heart rate. Your car may also collect text messages and contacts from your connected smartphone.

Meanwhile, your phone continuously senses and records your communications, information about your health and what apps you’re using, and tracks your location via cell towers, GPS satellites, Wi-Fi and Bluetooth.

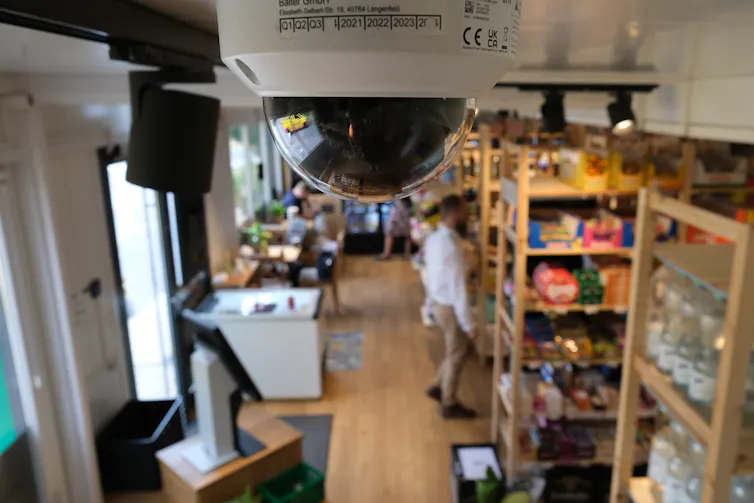

As you enter the store, its surveillance cameras identify your face and track your movements through the aisles. If you then use Apple or Google Pay to make your purchase, your phone tracks what you bought and how much you paid.

All this data quickly becomes commercially available, bought and sold by data brokers. Aggregated and analyzed by artificial intelligence, the data reveals detailed, sensitive information about you that can be used to predict and manipulate your behavior, including what you buy, feel, think and do.

Companies unilaterally collect data from most of your activities. This “surveillance capitalism” is often unrelated to the services that device manufacturers, apps and stores are providing you. For example, Tinder is planning to use AI to scan your entire camera roll. And despite their promises, “opting out” doesn’t actually stop companies’ data collection.

While companies can manipulate you, they can’t put you in jail. But the U.S. government can, and it now purchases vast quantities of your data from commercial data brokers. The government is able to purchase Americans’ sensitive data because the data it buys is not subject to the same restrictions as data it collects directly.

The government is also ramping up its ability to directly collect data through partnerships with private tech companies. These surveillance tech partnerships are becoming entrenched, domestically and abroad, as advances in AI take surveillance to unprecedented levels.

As a privacy, electronic surveillance and tech law attorney, author and legal educator, I’ve spent years researching, writing and advising about privacy and legal issues related to surveillance and data use. To understand the issues, it’s important to know how these technologies function, who collects what data about you, how that data can be used against you, and why the laws you might think are protecting your data don’t apply or are ignored.

Sebastian Willnow/picture alliance via Getty Images

Big money for AI-driven tech and more data

Congressional funding is supercharging huge government investments in surveillance tech and data analytics driven by AI, which automates analysis of very large amounts of data. The massive 2025 tax-and-spending law netted the Department of Homeland Security an unprecedented US$165 billion in annual funding. Immigration and Customs Enforcement, part of DHS, got about $86 billion.

Disclosure of documents allegedly hacked from Homeland Security reveals a vast surveillance web that has all Americans in its scope.

DHS is expanding its AI surveillance capabilities with a surge in contracts to private companies. It’s reportedly funding companies that provide more AI-automated surveillance in airports; adapters to convert agents’ phones into biometric scanners; and an AI platform that acquires all 911 call center data to build geospatial heat maps to predict incident trends. Predicting incident trends can be a form of predictive policing, which uses data to anticipate where, when and how crime may occur.

DHS has also spent millions on AI-driven software used to detect sentiment and emotion in users’ online posts. Have you been complaining about Immigration and Customs Enforcement policies online? If so, social media companies including Google, Reddit, Discord, and Facebook and Instagram owner Meta may have sent identifying data, such as your name, email address, phone number and activity, to DHS in response to hundreds of DHS subpoenas served on the companies.

Meanwhile, the Trump administration’s national policy framework for artificial intelligence, released on March 20, 2026, urges Congress to use grants and tax incentives to fund wider deployment of AI tools across American industry and to allow industry and academia to use federal datasets to train AI.

Using federal datasets this way raises privacy law concerns because they contain a lifetime of sensitive details about you, including biographical, employment and tax data.

Blurring lines and little oversight

In foreign intelligence work, the funding, development and regulated use of certain AI-driven data gathering makes sense. The CIA’s new acquisition framework to turbocharge collaboration with the private sector may be legal with proper oversight. But the line between collaborating for lawful national security purposes and unlawful domestic spying is becoming dangerously blurred or ignored.

For example, the Pentagon has declared a contractor, Anthropic, a national security risk because Anthropic insisted that its powerful agentic AI model, Claude, not be used for mass domestic surveillance of Americans or fully autonomous weapons.

On March 18, 2026, FBI Director Kash Patel confirmed to Congress that the FBI is buying Americans’ data from data brokers, including location histories, to track Americans.

As the government accelerates its use of and investment in AI-driven spy tech, it’s mandating less oversight of AI technology. In addition to the national AI policy framework, which discourages state regulation of AI, the president has issued executive orders to accelerate federal government adoption of AI systems, remove state law barriers to AI and require that the government not procure AI models that attempt to adjust for bias. But using advanced AI systems is risky, given reports of AI agents going rogue, exposing sensitive data and becoming a threat, even during routine tasks.

Your data

The surveillance capitalism system requires people to unwittingly participate in a manipulative cycle of group- and self-surveillance. Neighborhood doorbell cameras, Flock license plate readers and hyperlocal social media sites like Nextdoor create a crowdsourced record of everyone’s movements in public spaces.

Justin Sullivan via Getty Images

Sensors in phones and wearable devices, such as earbuds and rings, collect ever more sensitive details. These include health data such as your heart rate and heart rate variability, blood oxygen, sweat and stress levels, behavioral patterns, neurological changes and even brain waves. Smartphones can be used to diagnose, assess and treat Parkinson’s disease. Earbuds could be used to monitor brain health.

This data isn’t protected under HIPAA, which prohibits health care providers and those working with them from disclosing your health data without your permission, because the law does not consider tech companies to be health care providers or these wearables to be medical devices.

Legal protections

People have little choice when buying devices, using apps or opening accounts but to agree to lengthy terms that include consent for companies to collect and sell their personal data. This “consent” allows their data to end up in the largely unregulated commercial data market.

The government claims it can lawfully purchase this data from data brokers. But in buying your data in bulk on the commercial market, the government is circumventing the Constitution, Supreme Court decisions and federal laws designed to protect your privacy from unwarranted government overreach.

The Fourth Amendment prohibits unreasonable search and seizure by the government. Supreme Court cases require police to get a warrant to search a phone or use cellular or GPS location data to track someone. The Electronic Communications Privacy Act’s Wiretap Act prohibits unauthorized interception of wire, oral and electronic communications.

Despite some efforts, Congress has failed to enact legislation to protect data privacy, govern the use of sensitive data by AI systems or restore the intent of the Electronic Communications Privacy Act. Courts have allowed the broad electronic privacy protections in the federal Wiretap Act to be eviscerated by companies claiming consent.

In my view, a start to addressing these problems is to restore the Wiretap Act and related laws to their intended purposes of protecting Americans’ privacy in communications, and for Congress to follow through on its promises and efforts by passing legislation that secures Americans’ data privacy and protects them from AI harms.

This article is part of a series on data privacy that explores who collects your data, what and how they collect, who sells and buys your data, what they all do with it, and what you can do about it.